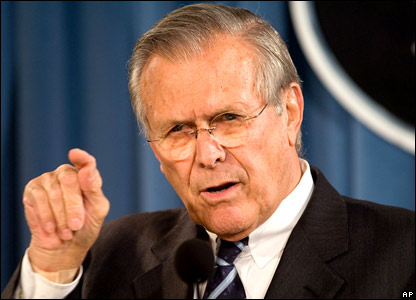

_“as we know, there are known knowns; there are things we know we know. We also know there are known unknowns; that is to say we know there are some things we do not know. But there are also unknown unknowns – the ones we don’t know we don’t know.” (US Defense Secretary Donald Rumsfeld, February 12, 2002)_


I’ve been a longtime reader of the blog “Messy Matters” (which conjures terrible images now that I am potty training a toddler). The authors, Sharad Goel and Daniel Reeves, are academics who work in the Microeconomics and Social Systems (get it, MESS?!?) lab funded by Yahoo!. (What does Strunk and White say about punctuation after a proper noun which includes punctuation as part of the proper noun?) Anyhow, the Messy Matters blog recently had a very interesting post about testing whether you are overconfident. The gist is this: take a test, and instead of answering each question exactly, give a lower and an upper bound that you think represent a 90% confidence band around the right answer. If you haven’t seen this done, you should go take a look and then read the rest of this blog post.
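If you want to score yourself the same way, here’s a minimal sketch in Python (my own, not anything the quiz provides). Each answer is a (low, high) pair, and a well-calibrated 90% guesser should capture the true value about 9 times out of 10. The function name and the three questions below are hypothetical.

```python
def calibration_score(intervals, truths):
    """Return the fraction of true answers that land inside their (low, high) bounds."""
    if len(intervals) != len(truths):
        raise ValueError("need one interval per true answer")
    hits = sum(1 for (low, high), truth in zip(intervals, truths) if low <= truth <= high)
    return hits / len(truths)

# Hypothetical quiz: made-up bounds and made-up true answers.
guesses = [(100, 200), (5, 10), (1_000, 5_000)]
answers = [150, 12, 2_500]
rate = calibration_score(guesses, answers)
print(f"hit rate {rate:.0%} vs. 90% target")  # prints "hit rate 67% vs. 90% target"
```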

I didn’t do worth a shit on their “overconfidence” test. I think I got 5 of the 10 ranges right; the other 5 times the real answer fell outside my bounds. As I was answering the questions I had a strong feeling of not being confident at all. I was very tempted to give HUGE ranges on some of the questions because I felt totally unable to make a good guess. Instead, when I didn’t know the answer, I took a SWAG and tried to put in big ranges, but not TOO big. I’m not the only one who struggled with this test: in their summary of results I fall in the 76th percentile. Hey, I’m above average… or at least above the median. Clearly I didn’t know how wrong I was in many cases. But does this mean I am “overconfident”? I don’t think so. I think it means something a bit more subtle. This exercise reminded me of building a forecasting model and trying to predict values far outside the training data.
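How unlikely is 5 out of 10 if my bands really were 90% bands? A quick sketch, assuming the 10 questions are independent and each band captures the truth with probability 0.9, so the number of hits is Binomial(10, 0.9). The helper name is my own.

```python
from math import comb

def prob_at_most(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))

# Assumption: 10 independent questions, each "90%" band hits with probability 0.9.
p_five_or_fewer = prob_at_most(5, 10, 0.9)
print(f"{p_five_or_fewer:.4f}")  # prints "0.0016"
```

That’s roughly a 0.16% chance, so whatever the cause, my bands were much too narrow for those questions.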

Having read the book _On Intelligence_, I am convinced that one of the main functions of the human brain (or at least the neocortex) is to be a pattern-matching machine. We all build little mental models in our heads all the time. These models are trained, by definition, on the situations we run into day in and day out, and they are VERY accurate around the mean (i.e., around the experiences we are used to having). For example, how small a grain of sand can you feel between your teeth? Our brains have a ‘model’ of what it normally feels like when our teeth close against each other, and the slightest unexpected disruption in that pattern gets noticed. Ever miss a step when walking down stairs? When did you know you were in trouble? Probably when your foot was about 2 inches past where you expected the next step to be. You didn’t have to wait for your face to hit the railing before your mental model of stair walking started sounding warning bells. We humans are freaking amazing mental model makers!
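The forecasting-model comparison can be made concrete with a toy sketch (mine, not from the quiz or the book): fit a straight line to data from a narrow, familiar range, then ask it about something ten times further out. The quadratic “truth” and the ranges are arbitrary assumptions chosen only to illustrate extrapolation.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def truth(x):
    # Hypothetical "real world": a curve the model only ever sees between 10 and 20.
    return x ** 2

train_x = list(range(10, 21))            # everyday experience: x from 10 to 20
train_y = [truth(x) for x in train_x]
slope, intercept = fit_line(train_x, train_y)

for x in (15, 150):                      # inside vs. far outside the training range
    guess = slope * x + intercept
    rel_err = abs(guess - truth(x)) / truth(x)
    print(f"x={x}: guess {guess:.0f}, truth {truth(x)}, off by {rel_err:.0%}")
```

Inside the familiar range the line is off by about 4%; at x = 150 it is off by about 81%, and nothing in the fit itself warns you.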

Well, we’re amazing… except when we suck. We suck when we are faced with trying to predict something that is orders of magnitude outside our experience. The question on the MESS test I struggled with most was how much an empty 747 weighs. I don’t ever deal with massive weights. Ever. I could only think of two reference points: 1) my first car was a ’69 Cadillac, which I know weighed 5,040 lbs (we used to call it “Two and a half tons of fun”), and 2) a hopper-bottom rail car carries ~3,500 bushels of corn, which is ~196,000 lbs. And I’ve never been up next to a 747. But they are HUGE. I’ve seen pictures of the space shuttle riding around on the back of one of those bad boys. But they have to be pretty light relative to their volume because they have a lot of cargo room. Then I did the math on how many 1969 Cadillacs equal one rail car of corn… almost 39!?!? But rail cars are not that big. I’ve climbed up on rail cars of grain, and it kinda seems like one should be about 10 Cadillacs big. At that point I was pretty perplexed and just guessed a range, which turned out to be WAAAY too high. It turns out that an empty 747 weighs around 360,000 lbs, which is less than 2 rail cars of corn (not including the cars themselves, just the weight of the corn!). My intuition, as trained by my two data points, didn’t do worth a tinker’s damn at guessing the weight of airplanes.
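Here’s that back-of-the-envelope arithmetic written out, using only the numbers quoted above plus the standard 56 lb bushel of shelled corn (which is what makes 3,500 bushels come to ~196,000 lbs).

```python
# Reference weights in lbs, as quoted in the paragraph above.
CADILLAC_1969 = 5_040
RAIL_CAR_OF_CORN = 3_500 * 56      # 196,000 lbs of grain, not counting the car itself
EMPTY_747 = 360_000                # approximate

print(f"Cadillacs per rail car of corn: {RAIL_CAR_OF_CORN / CADILLAC_1969:.1f}")   # 38.9
print(f"Rail cars of corn per empty 747: {EMPTY_747 / RAIL_CAR_OF_CORN:.2f}")     # 1.84
print(f"Cadillacs per empty 747: {EMPTY_747 / CADILLAC_1969:.1f}")                 # 71.4
```

So an empty 747 is only about 71 of my old Cadillacs, a number my two reference points gave me no way to feel.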

But here’s the whole point of that last paragraph: if a human has no reference points and no experience with a domain, we (or at least I) can’t make good guesses and, more importantly, we can’t know how bad our guesses are! **We CAN’T know how much we suck!** If you think in terms of distributions, this exercise is akin to having a very small sample and trying to estimate the distribution’s spread (its second central moment, the variance, or its square root, the standard deviation). Well shit, we know in practice that with small samples our estimate of the mean has a big error term, and our estimate of the standard deviation is shaky too, which means even the width of the band we draw is itself a guess.
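To put a number on the small-sample problem, here’s a simulation sketch under loudly stated assumptions: the “world” is a standard normal distribution, you have seen only n examples of it, and you draw a naive 90% band as mean ± 1.645 × sample SD around that experience, then check how often a fresh draw lands inside. The function name, seed, and trial count are arbitrary choices of mine.

```python
import math
import random

def naive_band_coverage(n, trials=20_000, z=1.645, seed=1):
    """Fraction of fresh draws inside mean ± z * SD, both estimated from n past draws.

    Assumes the world is a standard normal; z = 1.645 is the two-sided 90% normal multiplier.
    """
    if n < 2:
        raise ValueError("need at least two past draws to estimate a standard deviation")
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        past = [rng.gauss(0.0, 1.0) for _ in range(n)]
        center = sum(past) / n
        spread = math.sqrt(sum((x - center) ** 2 for x in past) / (n - 1))  # sample SD
        fresh = rng.gauss(0.0, 1.0)
        if abs(fresh - center) <= z * spread:
            hits += 1
    return hits / trials

for n in (3, 30):
    print(f"n={n}: the nominal 90% band covers about {naive_band_coverage(n):.0%} of fresh draws")
```

With n = 3 the “90%” band covers only roughly 71% of new draws; with n = 30 it gets close to nominal, at roughly 88%. A proper small-sample prediction interval would use a Student-t multiplier instead of 1.645; the naive version is shown because it mimics guessing a spread from very little experience.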

So simply put, putting confidence bands around a guess that is outside my area of experience is really hard, and I’m not good at it. The biggest problem is knowing when I’m out of my domain. In both _The Black Swan_ and _Fooled by Randomness_, Nassim Nicholas Taleb points out that the single strongest predictor of how badly someone will do at _the confidence band game_ is whether they hold a PhD. If anyone has a reference for the study he refers to, I’d love to see it. I’m resisting the temptation to throw stones at both actuaries and finance quants right here. And if I didn’t live in a glass house, I would!

My takeaway from all this is that confidence bands around a guess should **not** be expected to be statistically accurate. That’s the very nature of not knowing something at all: we don’t even know what we don’t know (thank you, Donald Rumsfeld). The very definition of an expert might be someone who, when they don’t know the exact answer, can at least put accurate confidence bands around their guess. In other words, you need some level of knowledge to put accurate confidence bands around a guess. And failing to do that is not necessarily overconfidence. It might just be ignorance.